Computer intelligence has been hotly debated since 1950, when Alan Turing proposed the Turing Test. Over the years the argument has settled into two positions: strong AI versus weak AI. Strong AI (Artificial Intelligence) hypothesizes that some forms of artificial intelligence can truly reason and solve problems, with computers having an element of self-awareness but not necessarily exhibiting human-like thought processes. Weak AI, by contrast, argues that computers can only appear to think and are not conscious in the way that human brains are.

These areas of thinking cause fundamental questions to arise, such as:

‘Can a man-made artifact be conscious?’ and ‘What constitutes consciousness?’

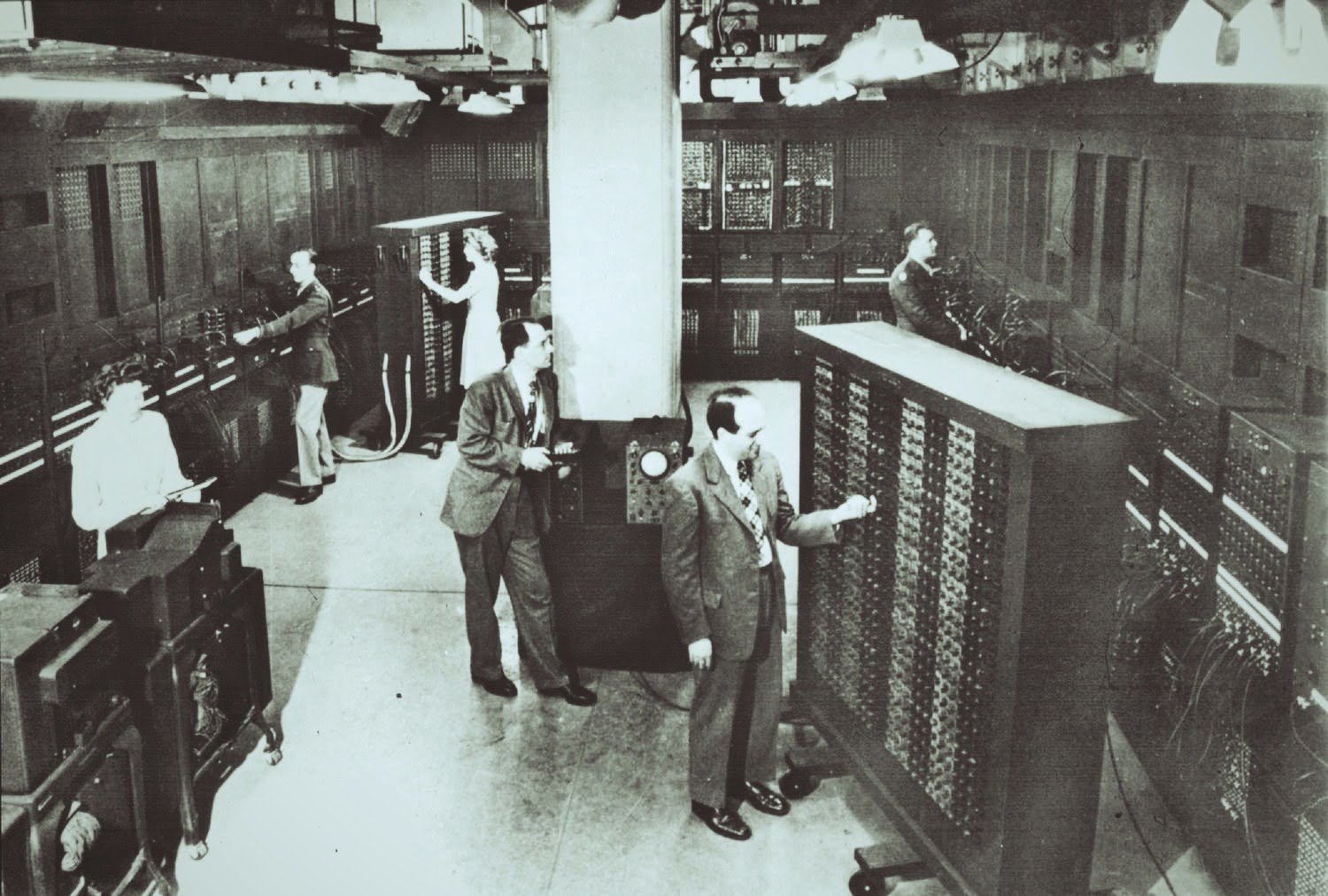

Turing’s 1948 and 1950 papers followed the construction of universal logical computing machines, introducing the prospect that computers could be programmed to execute tasks that would be called intelligent when performed by humans. Turing’s idea was to create an imitation game on which to base the concept of a computer having its own intelligence. A man (A) and a woman (B) are separated from an interrogator, who has to work out which is the man and which is the woman. A’s objective is to trick the interrogator, while B tries to help the interrogator discover the identities of the two players. Turing asks the question:

‘What will happen when a machine takes the part of A in this game? Will the interrogator decide wrongly as often when the game is played like this as he does when the game is played between a man and a woman?’

Turing’s test offered a simple means of testing for computer intelligence, one that neatly avoided dealing with the mind-body problem. That the test did not introduce variables and was conducted in a controlled environment were just some of its shortfalls. Robert French, in his evaluation of the test in 1996, stated the following: ‘The philosophical claim translates elegantly into an operational definition of intelligence; whatever acts sufficiently intelligent is intelligent.’

However, as he perceived, the test failed to explore the fundamental areas of human cognition and could be passed ‘only by things that have experienced the world as we have experienced it’. He thus concluded that ‘the Test provides a guarantee, not of intelligence but culturally-oriented human intelligence’. Turing postulated that a machine would one day be created to pass his test and would thus be considered intelligent.

However, as years of research have explored the complexities of the human brain, the pioneer scientists who promoted the idea of the ‘electronic brain’ have had to re-scale their ideals to create machines that assist human activity rather than challenge or equal our intelligence.

John Searle, in his 1980 Chinese Room thought experiment, argued that a computer could not be attributed with the intelligence of a human brain, as the processes were too different. In an interview he describes his original experiment:

Just imagine that you’re the computer, and you’re carrying out the steps in a program for something you don’t understand. I don’t understand Chinese, so I imagine I’m locked in a room shuffling Chinese symbols according to a computer program, and I can give the right answers to the right questions in Chinese, but all the same, I don’t understand Chinese.

All I’m doing is shuffling symbols. And now, and this is the crucial point: if I don’t understand Chinese based on implementing the program for understanding Chinese, then neither does any other digital computer on that basis, because no computer’s got anything I don’t have.

John Searle does not believe that consciousness can be reproduced at a level equivalent to human capacity. Instead, it is the biological processes that are responsible for our unique make-up. He says that ‘consciousness is a biological phenomenon like any other and, ultimately, our understanding of it is most likely to come through biological investigation’. Considered this way, it is indeed far-fetched to think that the product of millions of years of biological adaptation can be equalled by the product of a few decades of human thinking.

John McCarthy, Professor Emeritus of Computer Science at Stanford University, advocates the potential for computational systems to reproduce a state of consciousness, viewing the latter as an ‘abstract phenomenon, currently best realized in biology,’ but arguing that consciousness can be realized by ‘causal systems of the right structure.’ The famous defeat of Garry Kasparov, the world chess champion, in 1997 by IBM’s computer, Deep Blue, prompted a flurry of debate about whether Deep Blue could be considered intelligent.

When asked for his opinion, Herbert Simon, a Carnegie Mellon psychology professor who helped originate the fields of AI and computer chess in the 1950s, said it depended on the definition of intelligence used. AI uses two definitions for intelligence: “What are the tasks, which when done by humans, lead us to impute intelligence?” and “What are the processes humans use to act intelligently?” Measured against the first definition, Simon says, Deep Blue “certainly is intelligent.” According to the second definition, he claims, it partly qualifies.

The trouble with the latter definition of intelligence is that scientists do not yet know exactly what mechanisms constitute consciousness. McCarthy explains that intelligence is the ‘computational part of the ability to attain goals in the world’. He emphasizes that problems in AI arise because we cannot yet characterize in general what computational procedures we want to call intelligent. To date, computers can show a good grasp of specific mechanisms through the running of certain programs, which McCarthy deems ‘somewhat intelligent.’

Computing languages advanced by leaps and bounds during the last century, from the first machine code to mnemonic ‘words’. By the 1990s, so-called high-level languages were the norm for programming; Fortran, released in 1957, is generally regarded as the first widely used compiled high-level language. Considering the rapid progress of computer technology since it began, unforeseeable developments will likely occur over the next decade. A simulation of the human imagination might go a long way to convincing people of computer intelligence.

However, many believe that it is unlikely that a machine will ever equal the intelligence of the being who created it. Arguably it is the way that computers process information, and the speed with which they do it, that constitutes their intelligence, thus causing computer performance to appear more impressive than it is. Programs trace pathways at an amazing rate; for example, each move in a game of chess, or each section of a maze, can be completed almost instantly.

Yet the relatively simple process of trying each potential path fails to impress once it is understood. Thus, the intelligence is not in the computer, but in the program. For practical purposes, and certainly in the business world, the answer seems to be that if a system seems to be intelligent, it doesn’t matter whether it is. However, computational research faces a difficult task in exploring the simulation, or emulation, of the areas of human cognition.
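To make the point about "trying each potential path" concrete, here is a minimal sketch of an exhaustive maze search. It is an illustration only: the grid layout, the `solve_maze` function and the `MAZE` example are assumptions made up for this sketch, not anything taken from the systems discussed in this essay.

```python
from typing import List, Optional, Tuple

Cell = Tuple[int, int]  # (row, column)


def solve_maze(grid: List[str], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Return a path from start to goal by trying each open neighbour in turn.

    '#' marks a wall; any other character is an open cell.
    Returns None when every potential path has been tried without success.
    """
    rows, cols = len(grid), len(grid[0])
    visited = set()

    def explore(cell: Cell, path: List[Cell]) -> Optional[List[Cell]]:
        if cell == goal:
            return path
        visited.add(cell)
        r, c = cell
        # Try right, down, left, up, backing out of each dead end.
        for dr, dc in ((0, 1), (1, 0), (0, -1), (-1, 0)):
            nr, nc = r + dr, c + dc
            nxt = (nr, nc)
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] != "#" and nxt not in visited:
                found = explore(nxt, path + [nxt])
                if found is not None:
                    return found
        return None

    return explore(start, [start])


# Hypothetical 3x4 maze used only for illustration.
MAZE = [
    "..#.",
    ".##.",
    "....",
]

if __name__ == "__main__":
    print(solve_maze(MAZE, (0, 0), (0, 3)))
```

Nothing in the routine resembles insight: it steps into a cell, tries each direction, and retreats from dead ends. Any appearance of cleverness comes from how quickly a machine can repeat that step.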

Research continues into the relationship between the mathematical descriptions of human thought and computer thought, in the hope of creating an identical form. Yet the limits of computer intelligence are still very much at the surface of the technology. In contrast, the flexibility of the human imagination that creates the computer has few or no limitations. What does this mean for computer intelligence? It means that scientists need to go beyond the mechanisms of the human psyche, and perhaps beyond programming, if they are to identify a type of machine consciousness that would correlate with that of a human.

Reference: Debate on Computer Intelligence. Retrieved from https://www.ukessays.com/essays/technology/are-computers-really-intelligent.php?vref=1
